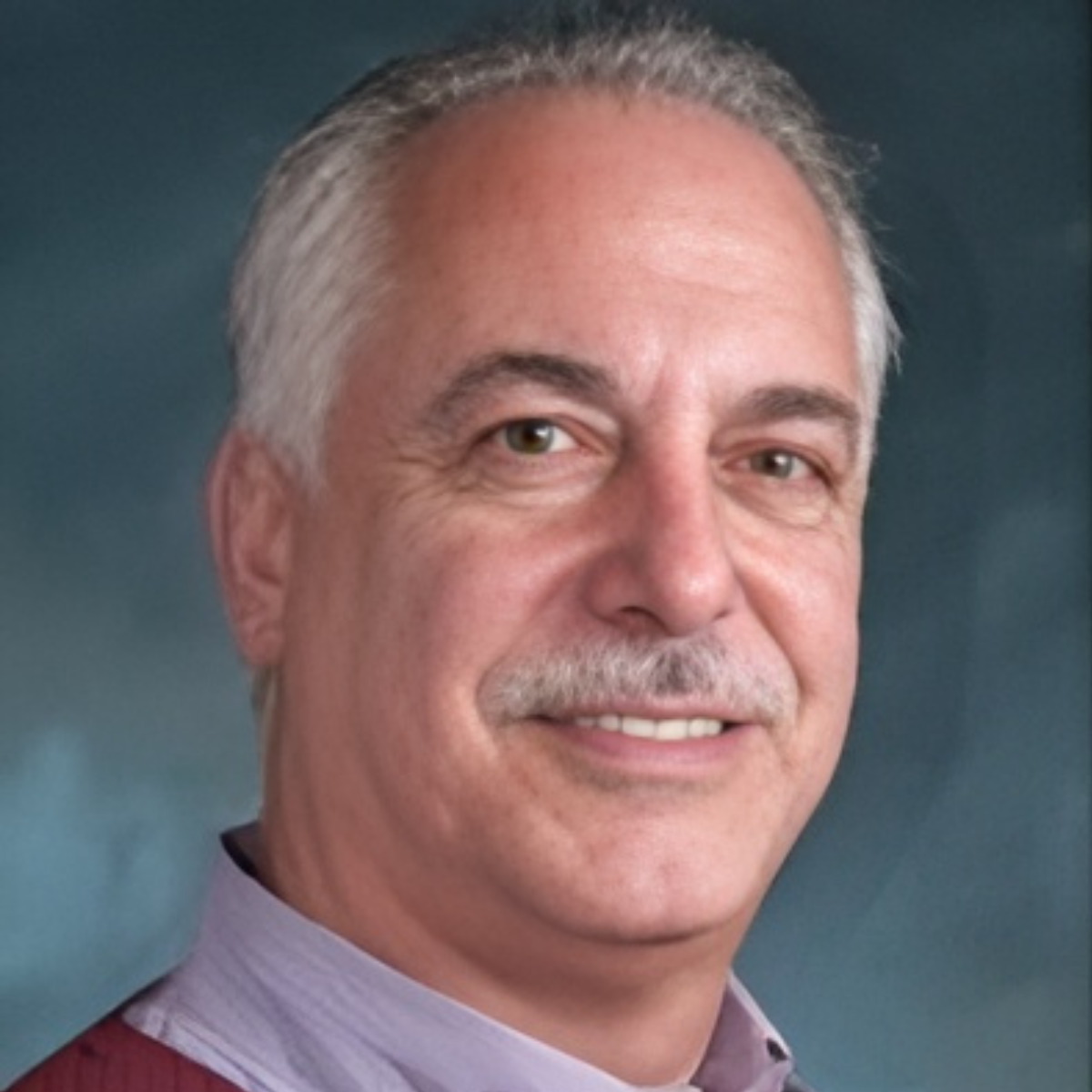

Stony Brook, NY, February 27, 2026 — In modern medicine, “AI” appears on every slide deck, from hospital boardrooms to pharmaceutical strategy meetings. Few people, however, spend as much time examining that buzzword as Edward Reiner, a Stony Brook ’77 alum whose career has followed healthcare’s shift from paper charts to terabytes of data and now to large language models.

Reiner, who studied at Stony Brook before earning an MBA and moving into industry, is now an advisor at Data Leaders Network, where he helps life sciences and healthcare organizations put AI to use in their day-to-day work. His focus is on health economics, epidemiology, and outcomes research, fields that rely on massive datasets to answer practical questions: does this drug work in the real world, and is it worth the cost?

“People talk about AI as if it’s magic,” he said. “What matters is whether it helps you make better decisions about real patients, real treatments, real systems.”

Reiner’s relationship with Stony Brook goes back to the 1970s, when he was an undergrad on a campus that looked very different from today. “A lot of the buildings were still going up,” he recalled. “There was mud everywhere; construction noise seeped into the halls. You had to watch where you stepped.” It was a far cry from today’s glass-walled offices and water displays, but it set him on a path that would eventually converge with some of the biggest shifts in healthcare technology.

After graduating from Stony Brook and completing his MBA, Reiner started out in publishing, handling financial operations at Simon & Schuster, McGraw-Hill, and Reed Elsevier. His move into healthcare came later, when he joined GE Healthcare’s IT business just as hospitals and medical practices were starting to adopt electronic medical records.

“At first, it was about getting information into the system,” he said. “But once you’ve captured years of diagnoses, lab results, and doctors’ notes, the obvious question becomes: what can we learn from this data?”

That question has followed him ever since, through roles at GE, Quintiles, IBM Watson Health, and now Data Leaders Network. Along the way, Reiner has watched AI go from a niche experiment to a headline feature, and he has seen how often people struggle to use it well.

He recently asked a senior leader at a major pharmaceutical company how much AI their teams were actually using.

“He told me, ‘Ed, I can’t even get my staff to use Microsoft Copilot,’” Reiner said. “They’re afraid to touch it. They don’t know how leadership will react to the results. That’s where the industry really is: big plans, big investments, and a workforce that isn’t sure how to bring AI into their everyday work.”

That gap between ambition and practice is what Data Leaders Network aims to close. Rather than sending people to generic “AI in healthcare” workshops, the organization embeds support in real projects.

“Think about a health economist or epidemiologist with a PhD,” Reiner said. “They’re experts in outcomes research, but nobody has ever taught them how to use large language models or machine learning. Then suddenly someone says, ‘Use AI on this six-month FDA project.’ That’s a terrifying ask.”

At Data Leaders Network, those experts join a community where mentors with deep experience in both AI and life sciences help them tackle their actual assignments, whether that means designing a study, analyzing data, or drafting a report.

“Someone might say, ‘I have to deliver a safety report on a new product in six months. I have a small budget and a huge dataset. I don’t even know where to begin.’ We don’t just lecture them about AI; we sit next to them (literally or figuratively) and work through it. You learn much faster when you’re applying these tools to the work you’re already responsible for.”

The scale of that work is hard to grasp. A single outcomes study might involve 20 years’ worth of data on tens of millions of patients: diagnoses, prescriptions, hospital visits, lab results, imaging, and sometimes genetic and biomarker information. These datasets reach multiple terabytes and grow every day.

“There is no way to analyze that kind of volume with traditional methods alone,” Reiner said. “AI isn’t an option at that point. It becomes the only way to study and notice certain patterns at all.” Those patterns underpin some of the most important questions in medicine: how to tailor treatments to individual patients, which therapies actually reduce hospitalizations, which interventions meaningfully lower the long-term burden of a disease.

“We talk about ‘burden of illness’ — the real cost of living with a condition,” he said. “Medication, hospitalizations, time off work, all of it. You might have a therapy that’s expensive up front, but if it prevents complications and hospital stays, the overall picture can change. AI doesn’t make these value judgments for us, but it gives us better evidence to argue from.”

Reiner is quick to point out that smarter tools don’t automatically mean smarter decisions. He recalled a physician colleague who used ChatGPT to draft a medical manuscript for a peer-reviewed journal. “He was ready to submit it right away,” Reiner said. “I asked, ‘Don’t you think someone should read this before it goes to the editor?’ Medical language is subtle. These models can misinterpret things. Overconfidence in AI output is just as dangerous as ignoring AI completely.”

For him, that’s the heart of the challenge in 2026: not building one more model, but changing how people work with AI.

“Most organizations don’t have a roadmap,” he said. “They’re overwhelmed by the number of tools: ChatGPT, DeepSeek, Claude, Perplexity, Copilot, Gemini. The smart people are trying several. But there’s still no clear sense of which tool is right for which problem, or how to teach thousands of employees to use any of these models responsibly.”

His advice to students and early-career professionals is pragmatic: experiment, but stay critical. “Don’t be intimidated,” he said. “Play with these models. Learn how to ask them questions, see what they’re good at, and where they fall apart. But never say, ‘That’s what the model gave me, so it must be right.’ You still own the final result.”

When he comes back to Stony Brook now, Reiner can see both how much has changed and what has stayed constant. “Back then, the cutting edge here was finishing the buildings,” he joked. “Now you’ve got an AI Innovation Institute, collaborations across computer science, medicine, humanities, ethics, and policy. The campus is part of a global conversation about how AI and healthcare fit together, and in a way that actually serves patients, not just the technology itself.”

What hasn’t changed, in his view, is the need to keep humans firmly in the loop. “AI isn’t going to replace doctors, researchers, or analysts,” he said. “But it is going to be the backbone of analytics in healthcare. The question is whether we use it thoughtfully, with real human judgment, or let the technology run ahead of our understanding.”