Researchers have long recognised that for artificial intelligence to truly collaborate with people, it must accurately anticipate human intentions. Peter Zeng, Weiling Li, and Amie Paige, from Stony Brook University, alongside Zhengxiang Wang, Panagiotis Kaliosis, Dimitris Samaras et al., investigated how Large Visual Language Models (LVLMs) establish ‘common ground’ during communication, a fundamental aspect of human interaction. Their new study, built on a referential communication experiment, reveals a significant limitation in LVLMs’ ability to interactively resolve ambiguous references, using a unique dataset of 356 human and machine dialogues.

Meet Manas Singh, a junior majoring in Computer Science with a specialization in Artificial Intelligence and Data Science. He is currently an undergraduate researcher in the Language Understanding and Reasoning (LUNR) Lab under Dr. Niranjan Balasubramanian.

Stony Brook, NY, February 13, 2026 — In his office lined with hand-drawn diagrams and alphabet-like symbols, Stony Brook researcher Jeffrey Heinz is trying to answer a deceptively simple question: How well, exactly, can today’s neural networks learn, and where do they fail?

Dr. Manoj Mahajan, Director of Special Programs at Stony Brook University, warned, in connection with the search around Nancy Guthrie's ransom note, that “a little bit of research” is enough to mask an IP address. The note suggests she may no longer be in Arizona. She was reported missing on Feb. 1, 2026.

Stony Brook University, along with the three other State University of New York (SUNY) university centers, will participate in Empire AI campus partnerships with other state colleges to advance artificial intelligence (AI) research and education for the public good.

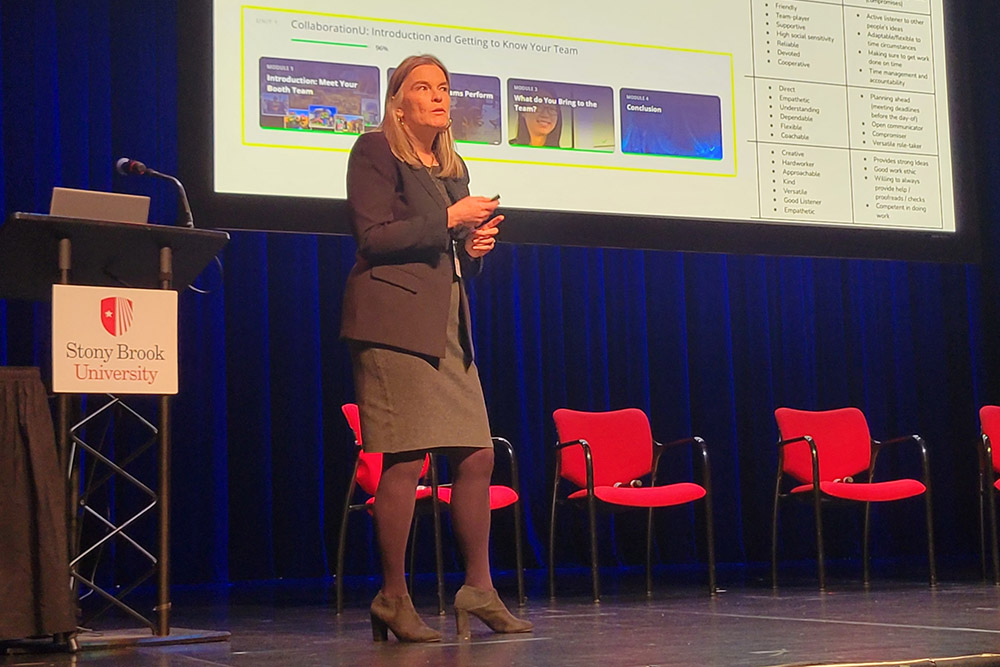

In the fall of 2025, the Middle Atlantic section of the American Society for Engineering Education met in the Charles B. Wang Center to exchange ideas and information about the role of artificial intelligence in education.

SUNY campuses will leverage Empire AI to increase research experiences, professional development, and other opportunities for SUNY students and faculty, furthering Governor Hochul's commitment to advancing AI usage for the public good.